The acute:chronic workload ratio – science or religion?

One of the most well-received and now controversial additions to the sports-injury prevention literature in the past few years is the notion of the acute:chronic workload ratio (ACWR). The model of the ACWR came to the fore following a blockbuster paper by an Australian researcher published in 2016(1).

The paper proposed that coaches and trainers could predict injury risk by calculating the ratio between acute training loads (training performed over the past week) and chronic training loads (average training accumulated over a longer period – usually estimated at four weeks). The theory is that if the acute training load is too high in relation to the chronic training load, the athlete is doing much more training than usual, thus creating a ‘spike’ in training load. These training load spikes serve as warning signs of an increase in the risk of injury.

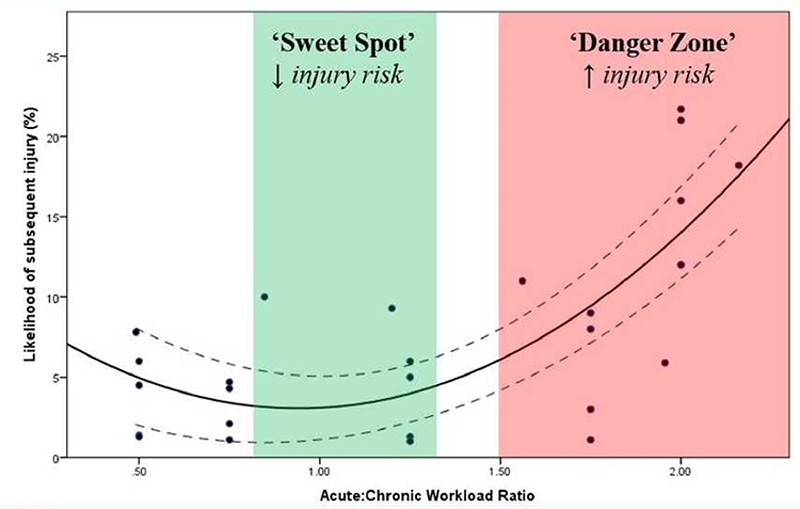

On the other hand, if the acute training load is much lower than the chronic training load, this represents a state of detraining. Unprepared for the demands of the sport they play, athletes may be at an increased risk of injury. Ultimately, the paper suggests that there is an ACWR sweet spot ranging from 0.8 to 1.3 within which athletes are at a much lower risk of injury (see figure 1).

Figure 1 – Relationship between the Acute:Chronic Workload Ratio (ACWR) and injury risk*(1)

*Image reproduced from Gabbett et al. The training-injury prevention paradox: should athletes be training smarter and harder?
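The calculation described above can be sketched in a few lines of Python. This is a minimal illustration, not the original authors' implementation: the function names `acwr` and `classify`, the use of weekly load totals in arbitrary units, and the example numbers are all assumptions made for the sake of the example. Only the one-week acute window, the four-week chronic window, and the 0.8 to 1.3 range come from the text.

```python
def acwr(weekly_loads, chronic_weeks=4):
    """Acute:chronic workload ratio from weekly load totals (oldest first).

    Acute load   = the most recent week's load.
    Chronic load = the average weekly load over the last `chronic_weeks`
                   weeks, including the most recent week.
    Returns None when there is too little history or no chronic load.
    """
    if len(weekly_loads) < chronic_weeks:
        return None
    window = weekly_loads[-chronic_weeks:]
    acute = window[-1]
    chronic = sum(window) / chronic_weeks
    if chronic == 0:
        return None
    return acute / chronic


def classify(ratio, low=0.8, high=1.3):
    """Place a ratio relative to the 0.8 to 1.3 'sweet spot' range."""
    if ratio is None:
        return "insufficient data"
    if ratio < low:
        return "below sweet spot (possible detraining)"
    if ratio > high:
        return "above sweet spot (load spike)"
    return "sweet spot"


# Hypothetical weekly loads in arbitrary units, oldest week first.
examples = {
    "steady": [400, 400, 400, 400],
    "spike": [400, 400, 400, 800],
    "taper": [400, 400, 400, 200],
}

for name, loads in examples.items():
    ratio = acwr(loads)
    print(f"{name}: ACWR = {ratio:.2f} -> {classify(ratio)}")
```

With these made-up numbers, the steady block gives a ratio of 1.00, doubling the final week gives 1.60 (a spike), and halving it gives roughly 0.57 (below the range).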

The impact of this paper was fairly sensational. To date, the article has been cited 755 times and tweeted about 2289 times (altmetric.com). The International Olympic Committee has recommended managing athlete training loads using the ACWR(2). Due to the paper’s influence, sports scientists and clinicians around the world now measure daily training loads for every athlete in their care to ensure that they stay within the ‘sweet spot’.

What’s the problem?

The publication of the ACWR method led to widespread and rapid adoption across many sporting environments. However, as practitioners started using the measure daily, problems became apparent. It was challenging to incorporate different data streams (eg, video vs. GPS) and to track players when they were in different environments (eg, international duty)(3).

Researchers also noted that the ACWR calculation failed to account for the decay of fitness and the effects of fatigue over time. They argued that the most recent week's worth of training has a much larger influence on current fatigue levels than training completed three weeks beforehand, yet the ACWR method treats all training loads across time as producing the same fatigue impact(4). The same researchers instead argued for the use of exponentially-weighted moving averages to calculate ACWR. This formula is undoubtedly a better method but, unfortunately, not nearly as easy to calculate and apply.

Other researchers noted that the ACWR calculation violates a mathematical rule regarding the construction of ratios(5). The ACWR formula suffers from something known as mathematical coupling: the acute load is also included within the chronic load (ie, it appears in both the numerator and the denominator of the ratio), which in mathematical terms can produce spurious outcomes. The presence of this coupling calls into question the validity of the research concluding that ACWR is a predictor of injury in the first place.
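To show what the exponentially-weighted alternative involves, here is a rough Python sketch. The article does not give the formula, so this assumes the commonly published form in which each day's value is `lam * load_today + (1 - lam) * value_yesterday`, with `lam = 2 / (N + 1)` for an N-day window. The function names, the 7-day and 28-day windows, the choice to seed the average with the first day's load, and the example loads are all illustrative assumptions.

```python
def ewma(daily_loads, span_days):
    """Exponentially-weighted moving average of daily loads (oldest first).

    Assumes the decay constant lam = 2 / (span_days + 1) and seeds the
    average with the first recorded load.
    """
    lam = 2 / (span_days + 1)
    value = daily_loads[0]
    for load in daily_loads[1:]:
        # Recent days count more; older days decay geometrically.
        value = lam * load + (1 - lam) * value
    return value


def ewma_acwr(daily_loads, acute_days=7, chronic_days=28):
    """Ratio of the short-window EWMA to the long-window EWMA."""
    chronic = ewma(daily_loads, chronic_days)
    if chronic == 0:
        return None
    return ewma(daily_loads, acute_days) / chronic


# Hypothetical daily loads in arbitrary units: 27 steady days, then a big day.
steady = [100] * 28
with_spike = [100] * 27 + [300]

print(f"steady:     {ewma_acwr(steady):.2f}")
print(f"with spike: {ewma_acwr(with_spike):.2f}")
```

Because the short window reacts faster than the long one, a single heavy day at the end of the block pushes the ratio above 1, whereas the same heavy day placed three weeks earlier would have largely decayed out of the acute value.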

Since then, researchers have highlighted further problems with the ACWR - most notably that the studies establishing its efficacy were likely statistically underpowered (ie, did not include enough data/subjects to eliminate random errors)(6). The accumulation of problems with the ACWR method for determining injury risk has led some researchers to call for a retraction of the original ACWR paper(7). However, despite the apparent flaws in the ACWR method, the use of this measure is now widespread within professional sport. Two international consensus statements recommend its use for athlete monitoring(2,8). Moreover, sports scientists and clinicians continue to make use of the measure despite researcher recommendations to discontinue its use. How did we get to a place where intelligent industry professionals ignore evidence and embrace dogma instead?

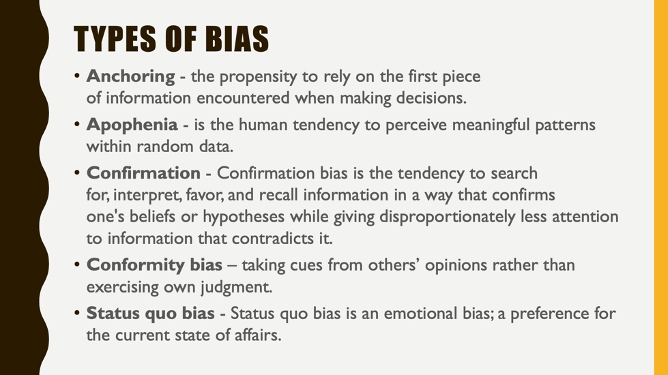

Appealing to bias

The answer may be that the ACWR method appeals to a number of our most powerful biases (see figure 2). Bias is a tendency to prefer one person or thing to another, and to favor that person or entity without logical reason. The fact is that whether it was scientifically validated or not, the ACWR method is a very appealing idea for sports professionals. Before the emergence of the ACWR, solid evidence showed that high training loads increased an athlete’s risk of injury(9). As a result, sports clinicians discouraged high training loads to avoid injury. But this default position of doing less was unpopular amongst many coaches and athletes, and cast the sports medicine practitioner as the training-load police. The emergence of ACWR provided a common language for clinicians and coaches, and a way to measure an athlete’s overall activity in relation to injury risk. Training load monitoring no longer focused on reducing load, but instead on how to safely manage athletes towards heavier loads. All involved had the understanding that high training loads were acceptable, as long as they were reached progressively over time.

Figure 2 – Types of bias that may have influenced the rapid adoption of the ACWR

Under these circumstances, practitioners who eagerly adopted the new ACWR paradigm could have been influenced by various biases. The premise of the ACWR method is that athletes can and should train hard, provided they adopt reasonable progressions towards these high training loads. As a concept, this aligns neatly with the expectation that athletes can perform at a more intense level than regular people. Thus sports professionals possibly sanctioned the use of the ACWR concept because of their confirmation bias, even though the data didn’t prove this point. Because of bias, the ACWR concept has more resonance than the data supporting it.

Other types of bias that likely influenced the uptake of the ACWR concept are anchoring (ideas once believed are hard to refute), and apophenia (the tendency to attribute injuries to ACWR measures even if they are unrelated). Finally, despite mounting evidence that contradicts the validity of this measure, practitioners around the world continue to measure activity and design programs according to ACWR. The persistent use of the ACWR reveals both conformity bias (everyone is doing it) and a status quo bias (a reluctance to go back to the way things were). The result is an entire industry of professionals using a measure with weak scientific evidence to support its use, but a great deal of conceptual attachment to what the formula might represent.

Where to go from here?

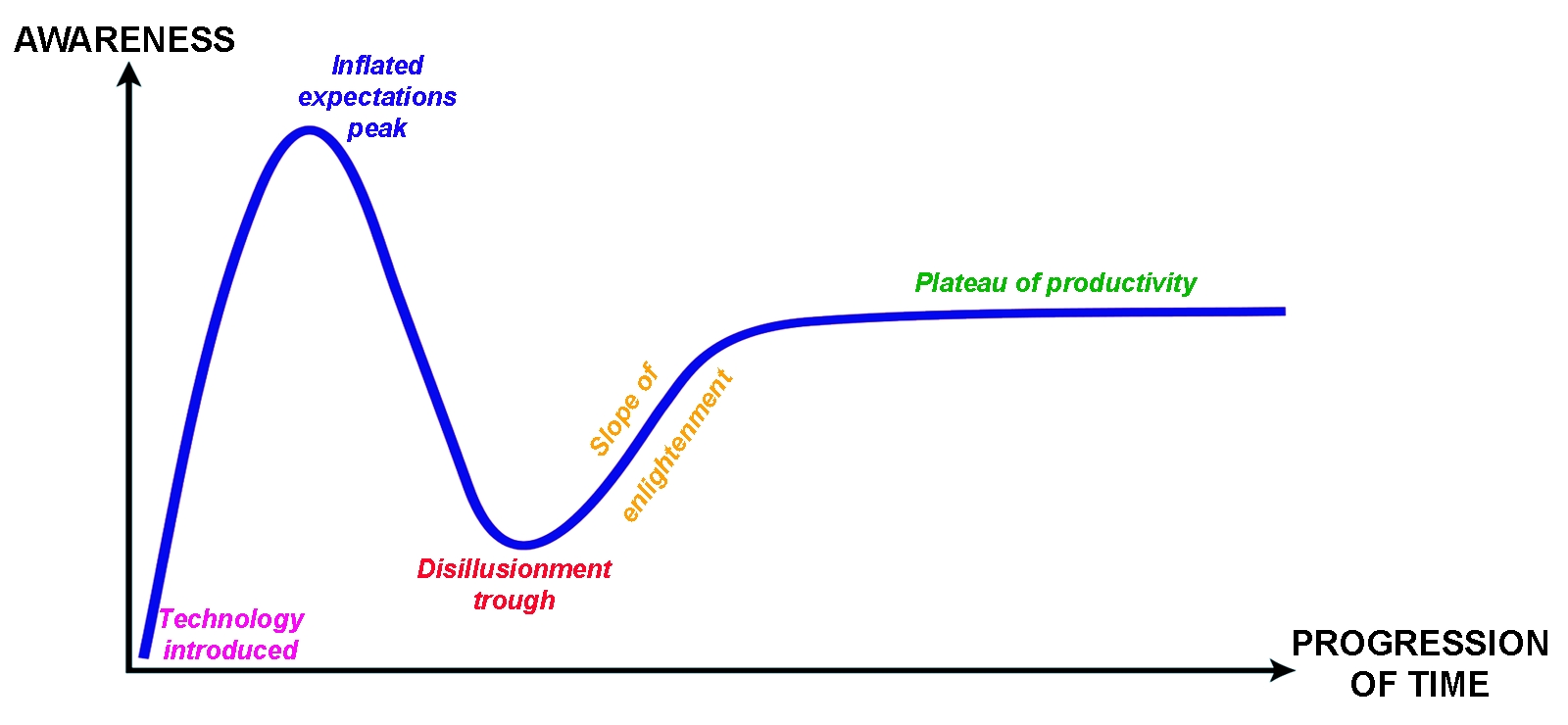

The life cycle of the ACWR concept, as described here, is similar to the ‘hype cycle’ (see figure 3) that usually occurs following the development of any new technology. Within the hype cycle, innovations transition through predictable stages from inception to widespread adoption. In the case of the ACWR, the initial research represents the technology trigger, while the widespread calls for adoption represent the peak of inflated expectations. Following sequentially, the concerns about the validity of the tool(4-7) represent the trough of disillusionment. Thus, it is not uncommon following an industry innovation to see widespread initial excitement, followed by despair that the technology did not work in the way developers imagined at the outset.

Figure 3 – The Gartner Hype Cycle

The plot describes the typical stages of adoption of new technologies from conception to maturity.

Within technological development, the trough of disillusionment phase usually results in reworking and reimagining the technology, so it becomes useful in other more pragmatic ways, leading to slower but more sustained adoption. Once effectively and reliably integrated into several industries, the technology reaches the plateau of productivity.

So what’s next for the ACWR? It is clear that the tool does not work in the way that it was originally intended(4-7). To carry on using it in its current form means ignoring the evidence of its deficits in favor of adhering to an appealing concept. However, the widespread expert consensus – that previous training loads influence current training capacity, and that athletes should progress to the highest sustainable loads possible – can’t be overlooked.

Thus, there is a need to reimagine the tool so that it provides the information as originally envisioned, namely, the relationship between activity and injury. Researchers suggest that while the ratio between acute and chronic training loads is a spurious measure, both have independent and meaningful effects on injury risk(8). The way forward is, therefore, likely the inclusion of these factors in a more advanced predictive model.
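The mathematical coupling problem raised earlier in the article can be made concrete with a small Python sketch. It compares the standard ratio, where the acute week is part of the chronic average, with a variant that leaves the acute week out of the denominator. The function names, the weekly totals in arbitrary units, and the "uncoupled" variant itself are assumptions used purely for contrast; the article does not propose the uncoupled form as a fix.

```python
def coupled_ratio(weekly_loads):
    """Acute week divided by the 4-week average that includes the acute week."""
    acute = weekly_loads[-1]
    chronic = sum(weekly_loads[-4:]) / 4
    return None if chronic == 0 else acute / chronic


def uncoupled_ratio(weekly_loads):
    """Acute week divided by the average of the three preceding weeks only."""
    acute = weekly_loads[-1]
    chronic = sum(weekly_loads[-4:-1]) / 3
    return None if chronic == 0 else acute / chronic


# Hypothetical weekly loads, oldest first: the final week doubles.
loads = [400, 400, 400, 800]
print(f"coupled:   {coupled_ratio(loads):.2f}")
print(f"uncoupled: {uncoupled_ratio(loads):.2f}")

# An extreme final week: the coupled ratio stays below 4 however large
# the spike, because the spike also inflates its own denominator.
extreme = [100, 100, 100, 10_000]
print(f"coupled (extreme):   {coupled_ratio(extreme):.2f}")
print(f"uncoupled (extreme): {uncoupled_ratio(extreme):.2f}")
```

In this example the same doubling of load reads as 1.60 in the coupled form and 2.00 in the uncoupled form, and with a four-week window the coupled ratio can never exceed 4. Reporting the acute and chronic loads as two separate values, rather than as a single ratio, avoids the shared term altogether.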

While we wait for science to recalibrate and find a better way to model and predict the risk of injury in athletes, there is value in considering and managing the chronic and acute workloads of athletes. Common sense prevails – avoid massive jumps in training load, and don’t prescribe high volumes of training until your athletes can cope with them.

References

1. Br J Sports Med. 2016 Jan;50(5):273-80.

2. Br J Sports Med. 2016 Aug;50(17):1030-41.

3. Br J Sports Med. 2016 Nov;51(18):3125-27.

4. Br J Sports Med. 2017 Jan;51(3):209-10.

5. Br J Sports Med. 2019 Aug;53(15):921-22.

6. Sports Med. 2020 Mar; doi:10.1007/s40279-020-01280-1

7. SportRxiv. 2019 Jul; doi:10.31236/osf.io/gs8yu

8. Int J Sports Physiol Perform. 2017 Apr;12(Suppl 2):S2161-S70.

9. Sports Med. 2016 Jun;46(6):861-83.
